Rita Anjana – NLP Engineer – Elemendar

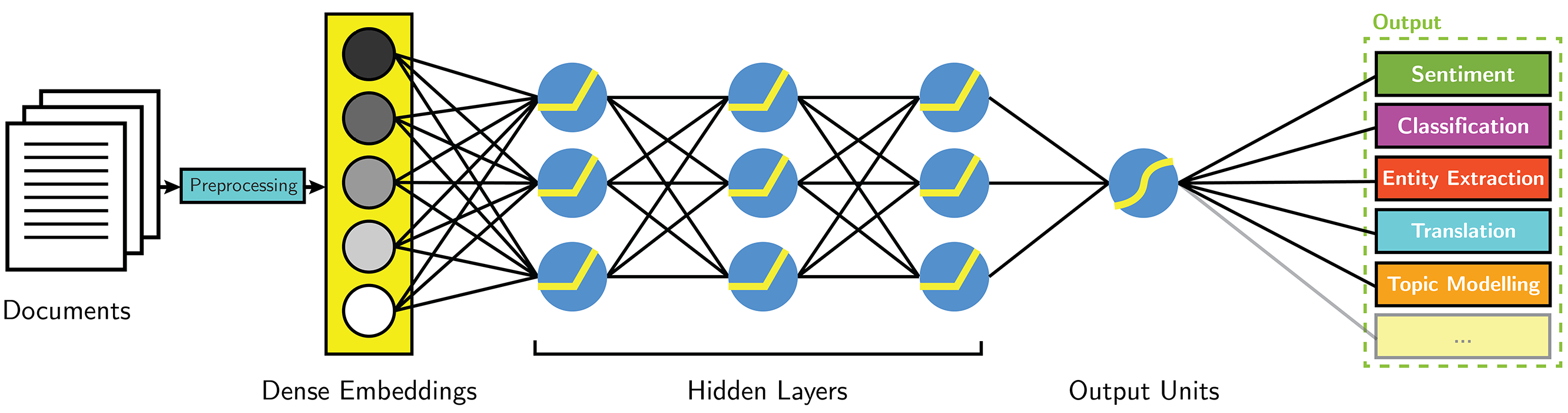

The AI Engine we are developing reads and translates a huge volume of unstructured text into structured and insightful reports(STIX), currently I am working on two key aspects: Data & the Entity Extraction model.

A machine learning model is only as good as the data it is fed, through which it learns to extract useful insights from raw text documents.

I noticed that there was a lot of scope in terms of making improvements to our data ingestion pipeline and perhaps making it more generic and easier to add new sources of data and as such I’ve been focusing on it primarily. This is essential, because if there are new data sources, such as just plain text files, pdf, scanned pdf docs etc.. Perhaps from new clients we can integrate them to our system with very minimal time, essentially it improves the speed of integration.

The other area that I’m focusing here is with respect to data standardisation, since we read data from a huge range of sources there’s a lot of different formats that we need to handle, and as such they need to be standardised and normalised once we read them in to our AI Engine – this helps us to eliminate any inconsistencies in the data, and manage it with ease.

Lastly, in case of the data ingestion pipeline, I’ve been upgrading our data ingestion system and making it parallelized which improves the speed with which we process the documents. hence, reducing the ingestion latency and improving the processing throughput.

So to summarise, with regards to data this is what I’ve been up-to:

- Currently we are trying to simplify the ingestion process, where we read data from multiple heterogeneous sources and collate them and create the raw data necessary for the ML model.

- We are also trying to make the ingestion pipeline more consistent and ensure the data integrity and reliability.

- Parallelizing the ingestion pipeline, to improve its throughput and reduce the latency.

In parallel to this I’ve been mostly doing some exploratory work on creating the Entity extraction system, and entity relationship prediction modules.

Most named entity recognition (NER) systems deal only with the flat entities and ignore the inner nested ones, which fails to capture finer-grained semantic information in underlying texts documents.

In case of nested-entities usually two different models are separately developed since sequence labelling models, which are used as a backbone for flat NER, can assign a single label to a particular token, which is not desirable for our use-case where a token can contain multiple sub-entities. the first model, identifies the first level of entity and further a second model identifies the sub-entities.

So in summary, on the modelling side, we’ve been doing these things:

- So far we’ve been exploring various ML model architectures (ex: Using transformers based LM, BiLSTM-CRF…etc) and doing some exploratory work on building robust features, from which the model can learn.

- As is the case with most real world data we also face a lot of data imbalance issues which need to be addressed, in order to improve the performance of our ML systems and as such I’m exploring various ways of doing this.

- Most of the existing models/architectures focus on this problem as a multi-class token classification problem, However in our use-case it makes much more sense to treat it as a multi-label token classification problem, as such we are researching on this, currently we are looking at using modern SOTA language models for this purpose.(Mostly we are experimenting with BERT & XLNET)

End